A look into the APIs in the field

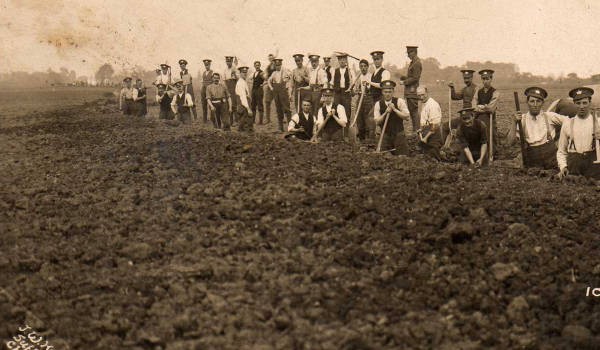

(Image: Photo of men from the 2/5th Battalion Gloucestershire Regiment digging a trench, The Great War Archive, University of Oxford)

What Are These APIs Anyway?

My background is really that of a generic developer, so when I originally read the project brief and saw the word API, I was expecting some sort of programmatic interface accessed via a library, like Microsoft’s .NET or some other comprehensive API.

Of course, in the discovery context I quickly found that the ethos is more to expose resources to the web via HTTP and access them RESTfully or similar. Making use of the data which is out there boils down to crafting an HTTP GET request, firing it at a server, and then being prepared to read what comes back into your application, where you can do whatever you like with it.
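As a rough illustration of what that boils down to, here is a minimal Python sketch using only the standard library. The endpoint `http://example.org/api/search` and the parameter names `q` and `format` are made up for the example and do not belong to any real provider.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Hypothetical endpoint, used purely for illustration.
BASE_URL = "http://example.org/api/search"


def build_url(base_url, params):
    """Append URL-encoded query parameters to the base address."""
    return base_url + "?" + urlencode(params)


def fetch_json(url, timeout=10):
    """Fire an HTTP GET at the server and decode a JSON body."""
    with urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Crafting the request is just a matter of building the right URL.
url = build_url(BASE_URL, {"q": "trench digging", "format": "json"})
print(url)
```

Calling `fetch_json(url)` against a real provider would then hand back ordinary Python dictionaries and lists, assuming that provider answers in JSON.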

I still had no idea what technologies existed: whether there were off-the-shelf providers, whether people had written their own APIs to fit with their metadata and world view, whether collections management software offered API features for publishing metadata to wider audiences, and so on. The phase one report mentioned that a lot of people had no API, and that some people were “developing” them. To me that implied in-house development, and that we might end up dealing with as many APIs as there are providers who have them! It was a relief when re-reading our project description to find “clear API access mechanisms and documented API standards” in our prioritisation matrix.

The other side of the coin was that our deliverable is our own aggregation API, and I was wondering whether this would entail us thinking up our own API syntax, or whether it made more sense to reuse an existing one.

API Features

In my last post, I described the APIs we found out there already. Among the sources which actually have digital content, you may have noted one in particular cropping up quite frequently: Solr. This sounded promising, being a standard, off-the-shelf product rather than an in-house effort!

Point 3 in our preliminary strategy is to look into functionality provided by existing APIs, so looking into the others, I quickly found that SRU and OpenSearch weren’t APIs in themselves, but rather standards or protocols which APIs should follow. OAI-PMH is an API for harvesting whole portions of someone else’s data and has no search capability, so making use of OAI sources in our aggregation API would essentially mean that we would have to harvest their data and then make it searchable locally using another product.

So, I set off to look at Solr in more detail and see what it was.

Solr Features

Apache Solr is a Java servlet which runs from a platform such as Tomcat or Jetty. It is built on top of Apache Lucene, which as far as I can tell does clever things with language and grammar in order to scan through text and identify search terms. It covers the oddities of language so that someone searching for something like “child” sees records which have words like “children,” which a straight string comparison routine would not catch, and it can determine whether or not text falls within a wildcard, occurs as a phrase, or falls within a proximity or a range if any of these are supplied as a query.
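To make those query types concrete, here are the kinds of strings involved, written in the Lucene query syntax that Solr’s standard query parser accepts. The field name `year` and the search terms are invented for illustration; a real index would use whatever fields its owner configured.

```python
from urllib.parse import urlencode

# One example per query type. Terms and the "year" field are hypothetical.
QUERIES = {
    "wildcard": "child*",                # any term starting with "child"
    "phrase": '"great war"',             # the two words side by side
    "proximity": '"trench digging"~5',   # both words within 5 positions
    "range": "year:[1914 TO 1918]",      # inclusive range on one field
}


def to_query_string(q):
    """URL-encode a query so it can be sent as the q parameter of a GET."""
    return urlencode({"q": q})


for kind, q in QUERIES.items():
    print(kind, "->", to_query_string(q))
```

The encoding step matters because characters such as quotes, colons, and square brackets cannot be placed in a URL as they are.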

That said, it still took me a while to really ‘get’ how Solr fitted in as an API — I think it is still very much one of those products where if you need it, you know what it is already, and if you don’t need it, it’s not so easy to figure out what it is even for.

So, a quick look behind the scenes: you take whatever metadata you have, do some configuration telling Solr what your fields are, and you load it all in. People can then type search strings into the Solr API, and Solr returns records from your metadata which it thinks contain terms which match the searches. To keep things running, you take on the task of running Solr on a Java server somewhere and reloading your metadata if it ever changes. In return, people can query and extract specific records from however many thousands you have in your collection.

Looking into how Solr works a little further, I can see why it crops up so frequently in the list. It places no restrictions at all on the structure of your metadata. Instead, you craft your configuration file to tell Solr what each field is (text to be searched, dates, numbers, etc.). Then you can load your metadata in and Solr files it for searching. It will accept XML metadata if you have it but, if you don’t, it will also take a variety of other formats, including plain and simple CSV (and this, I suspect, is its strongest selling point from the perspective of ticking the ‘we have an API now’ box).

In terms of returning results, it is equally flexible: you can have XML, JSON, or even data structures tailored to a whole host of other programming languages. However, all Solr results are returned with the same underlying data structure: a header section, and then a doc section containing collections of metadata for each hit.
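For anyone who has not seen one, here is a hand-written sample of what a Solr JSON response looks like, with a few lines of Python to pull it apart. The `responseHeader`, `response`, `numFound`, and `docs` keys are what Solr emits in its JSON format; the record fields `id` and `title` and their values are invented for this sketch.

```python
import json

# A made-up response in the shape Solr uses for JSON output.
SAMPLE = """
{
  "responseHeader": {"status": 0, "QTime": 3,
                     "params": {"q": "child", "wt": "json"}},
  "response": {
    "numFound": 2,
    "start": 0,
    "docs": [
      {"id": "rec-1", "title": "Children at play"},
      {"id": "rec-2", "title": "A child's letter home"}
    ]
  }
}
"""


def summarise(raw):
    """Split a Solr-style response into its header and its hits."""
    data = json.loads(raw)
    header = data["responseHeader"]   # status and echo of the request
    body = data["response"]           # hit count plus one dict per record
    return {
        "status": header["status"],
        "total": body["numFound"],
        "ids": [doc["id"] for doc in body["docs"]],
    }


summary = summarise(SAMPLE)
print(summary)
```

Because every provider running Solr wraps its records in this same envelope, only the fields inside each entry of `docs` differ from one source to the next.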

Early Ideas for our own API

Thinking along the lines of point 6 in our preliminary strategy, the number of Solr providers, coupled with standard query and response syntaxes and the ease of getting flat data live, means that from our point of view, having our API use Solr syntax might make the most sense:

- Identical query syntax would mean that we could just pass any queries sent to our API through to other Solr providers directly.

- Identical response syntax would mean we could easily merge all the results.

- People already familiar with Solr wouldn’t need to learn anything new to use our API.

- Providers with no API who wanted to get involved by giving us data could have that data loaded into a local instance of Solr, as a quick and easy way of getting their metadata online in an open and searchable fashion.

We could then generate mappings to convert our Solr-style queries into a form suitable for any non-Solr APIs we come across, and then reverse-map any responses into Solr format before amalgamating as described above.
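The idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not our design: the non-Solr parameter names in `OTHER_API_MAPPING` are hypothetical, the responses are hand-written dictionaries rather than live results, and merging is plain concatenation.

```python
# Hypothetical mapping from Solr-style parameters to another API's names.
OTHER_API_MAPPING = {"q": "searchTerms", "rows": "count", "start": "startIndex"}


def translate_query(solr_params, mapping):
    """Rename Solr-style parameters; drop any the other API lacks."""
    return {mapping[k]: v for k, v in solr_params.items() if k in mapping}


def to_solr_shape(items, total):
    """Reverse-map a list of records into a Solr-style envelope."""
    return {
        "responseHeader": {"status": 0},
        "response": {"numFound": total, "start": 0, "docs": items},
    }


def merge_responses(responses):
    """Combine several Solr-style responses into one."""
    docs, total = [], 0
    for r in responses:
        body = r["response"]
        total += body["numFound"]
        docs.extend(body["docs"])
    return to_solr_shape(docs, total)


# A query arriving at our API in Solr style.
incoming = {"q": "child", "rows": 10, "wt": "json"}
for_other_api = translate_query(incoming, OTHER_API_MAPPING)

# Pretend results: one from a Solr provider, one reverse-mapped.
solr_a = to_solr_shape([{"id": "a-1"}, {"id": "a-2"}], total=2)
other_b = to_solr_shape([{"id": "b-1"}], total=1)

merged = merge_responses([solr_a, other_b])
print(for_other_api)
print(merged["response"]["numFound"], [d["id"] for d in merged["response"]["docs"]])
```

Concatenation sidesteps the harder question of how to rank hits from different providers against each other, which a real merge would need to address.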

Once we had started thinking about our project in this way we realised we had alighted on the idea of federation rather than aggregation, and so I’ll discuss these two approaches in my next post!